Perplexity locks paying users out of their accounts until they hand over their phone number. Here is how to escape.

Mandatory SMS verification with no warning, no bypass, and no cancellation option. Combined with a pattern of privacy negligence, that is reason enough to move on.

Just days after we wrote about Anthropic requiring government-issued photo ID to access Claude, another major AI provider has crossed a line that should concern every privacy-conscious user.

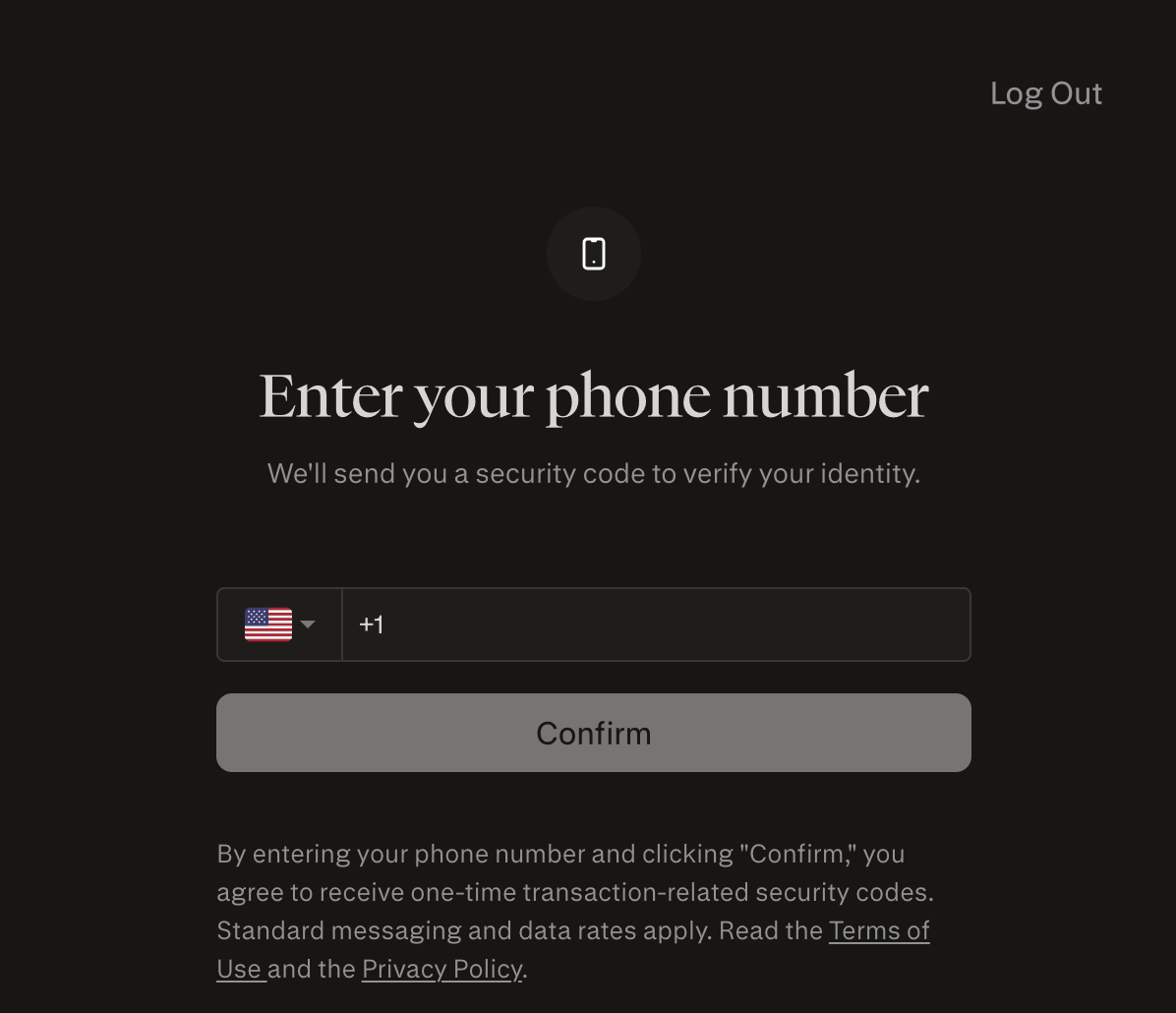

Perplexity AI has rolled out mandatory SMS phone verification for all accounts — including existing paid Pro subscribers — with no prior notice, no grace period, and no way to bypass or postpone it. If you do not hand over your phone number, you are locked out entirely. You cannot use Perplexity. You cannot access your settings. And critically, you cannot cancel your subscription either. You are being charged for a service you can no longer access without complying with a requirement that did not exist when you signed up.

Perplexity's own support (which, ironically, is itself just an AI) confirmed in writing: "Phone verification is a mandatory security requirement for using Perplexity on all platforms. This cannot be bypassed."

The immediate fallout

The user backlash was swift and consistent. Many Perplexity users have already contacted us about the problem. People who signed up with privacy-preserving email aliases found themselves unable to reach support through their accounts. Annual subscribers were locked out with months of paid access remaining. There was no announcement, no alternative verification method, and no refund process initiated by Perplexity.

This is not a minor inconvenience. This is a company retroactively changing the terms of a paid product and holding your subscription hostage until you comply.

Why SMS verification is the wrong kind of "security"

Perplexity is framing this as a security measure. That framing deserves scrutiny.

SMS-based two-factor authentication is widely documented as one of the weakest forms of 2FA available. It is vulnerable to SIM-swapping attacks, SS7 protocol interception, and carrier-level data requests from law enforcement. Linking your real phone number to an AI account that holds your entire search and conversation history does not make you safer. It creates a single point of failure that ties your verified real-world identity to everything you have ever asked.

The companies demanding the most sensitive personal data are doing the least to protect it. Perplexity is a clear example of this pattern.

Perplexity's privacy record: A pattern, not an incident

The phone verification rollout did not happen in a vacuum. It fits a broader pattern of Perplexity treating user privacy as an afterthought — if it is considered at all.

First: They train on your data by default. When you sign up for Perplexity and purchase a subscription, your search queries are automatically used to improve their AI models. Most users never know this. To opt out, you have to navigate to Settings → Preferences and manually disable "AI Data Retention." Their own description of this setting reads: "AI data retention allows Perplexity to use your search queries to improve its AI models. Turn this setting off if you want your data to be excluded from this process."

Opted in by default. Buried in settings. No prominent disclosure at signup. This is a deliberate choice.

Second: They likely sell your data. Perplexity operates at a pace that consistently deprioritises security and data governance. The company has moved fast and repeatedly broken user trust in the process. Evidence points strongly toward data being shared with or sold to third-party providers, though Perplexity's public documentation remains deliberately vague on this point.

Third: The hardcoded API key incident. This one illustrates the culture of the company better than any policy statement could.

Perplexity uses a third-party favicon service called logo.dev. It hardcoded its API key directly into image source URLs, visible to anyone who opened their browser's developer tools. Developers noticed quickly and started using the exposed key for their own projects, running up charges on Perplexity's account. It took Perplexity two months to figure out why its logo.dev request volume was so high.

The problem? It's still not fixed.

Our own recent check found that the hardcoded key is still present and has not been fully removed from their website. You can verify this yourself by pasting the following directly into your browser's address bar: https://img.logo.dev/wikipedia.org?token=pk_NNp9abu9TMm9II6Z0666YA (still not fixed as of 6 May 2026)

That URL loads a Wikipedia favicon using Perplexity's own API token. Anyone can use it. It still works.

A company that cannot protect its own API keys and exposes them publicly in its frontend code is not a company you should trust with your search history, your chats, your thoughts, your identity, or your phone number.

The bigger picture: A pattern across the industry

This is now a clear and accelerating pattern.

Claude requires a government-issued photo ID. Perplexity requires your phone number. Both can link your verified real identity to your AI conversation history. Once that link exists, it can be subpoenaed, breached, sold, or handed to law enforcement at any time. As the phone verification rollout shows, Perplexity can change its terms at any time and do whatever it wants with your data. Most users will simply comply to regain access. If you care about privacy, you need to devise an exit strategy from this surveillance system now.

The AI companies that started as tools are increasingly becoming gatekeepers that demand your identity before letting you through the door. Every week, the list of AI services you can use without proving who you are gets shorter.

How to solve the problem and get out of Perplexity

If you are currently locked out or want to leave before things get worse, here is what to do.

If you can still access the mobile app without updating it: Do not update it. Open it immediately and cancel your subscription from within the app before it forces the verification prompt.

To delete your data: Submit a request through the dedicated form at perplexity.typeform.com/datarequest and select "erase my personal data". Perplexity support says that this form works even without account access.

If you cannot access anything: Email support@perplexity.ai directly. State clearly that you cannot access your account due to the mandatory verification requirement, that you did not consent to this condition at the time of subscription, and that you are requesting a refund as well as full account deletion under your right to erasure (GDPR Article 17). Keep this email as evidence.

If they do not respond within one month (the deadline set by GDPR Article 12(3)): Escalate to your national data protection authority. In the EU, this is a straightforward process.

One important note on data deletion: Perplexity states that data is permanently deleted 30 days after a request is processed. Whether your conversation history, search queries, and inferred behavioural profiles are genuinely purged from training datasets, backup systems, and third-party data arrangements is a separate question — one that no major AI company has given a credible, verifiable answer to. The data you shared is worth a great deal. We have serious doubts that everything will be deleted, especially if it is already circulating in training datasets.

Making the switch simple

To make the transition away from Perplexity as frictionless as possible, we set up perplexity.lu to help users move quickly while protecting their privacy.

The switch itself is simple: replace the .ai ending of the Perplexity URL you are used to with .lu, and you are done.

No phone number. No identity check. No surveillance infrastructure attached to your searches. Made in the EU (Luxembourg), with full European data sovereignty, and built to protect your privacy from the ground up.

Say hello to your new privacy-first AI assistant: www.perplexity.lu

It redirects you to xPrivo.com — the open-source, privacy-first European alternative to Perplexity.

What comes next

The window in which AI can be used privately — without a passport, without a phone number, without a permanent link between your identity and your thoughts — is narrowing. Not because of a legal requirement. Because the large providers have decided they want it that way.

You do not have to accept this.

xPrivo is available at xprivo.com — no account, no ID, no tracking required. It can be used directly in the browser or downloaded and run entirely on your own hardware with local models, so no cloud provider ever sees what you ask.

The tools for anonymous, sovereign AI already exist. The question is whether enough people choose to use them before verification becomes the unavoidable default across the entire industry.